Well, I don’t know really. Most programmers that I know seem about as happy as the rest of the population, but I was thinking about programming and that variation on “A Policeman’s Lot” from the Pirates of Penzance appealed to me.

Programming is often presented as being difficult and esoteric, when in fact it is only a variation of what humans do all the time. When you read a recipe or follow a knitting pattern, you are essentially doing what a computer does when it “runs a program”.

The programmer in this analogy corresponds to the person who wrote the recipe or knitting pattern. Computer programs are not a lot more profound than a recipe or pattern, though they are, in most cases, a lot more complicated than that.

It’s worth noting that recipes and patterns for knitting (and for weaving for that matter) have been around for many centuries longer than computer programs. Indeed it could be argued that computers and programming grew out of weaving and the patterns that could be woven into the cloth.

In 1801 Joseph Marie Jacquard invented a method of punched cards which could be used to automatically weave a pattern into textiles. It was a primitive program, which controlled the loom. I imagine that before it was invented the operators were given a sheet detailing which threads to raise and which to drop, and which colour threads to run through the tunnel thus formed. I can also imagine that such a manual process would lead to mistakes, and so to errors in the pattern created in the cloth. It would also be time consuming, I expect.

Jacquard’s invention, by bypassing this manual method, would have led to accurately woven patterns and a great saving in time. An added advantage was that changing to another pattern would be as simple as loading a new set of punched cards.

At around this time, maybe a little later, the first music boxes were produced. These contained a drum with pins that plucked the tines of a metal comb. However the idea for music boxes goes back a lot further as the link above tells.

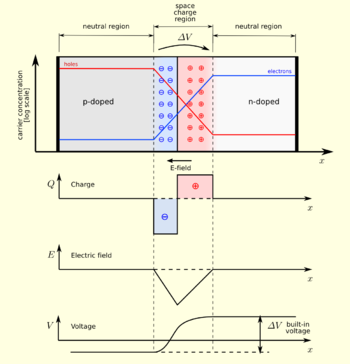

The only significant difference between Jacquard’s invention and the music boxes is that Jacquard relied on the holes and music boxes relied on pins. They operated in different senses, positive and negative but the principle is pretty much the same.

Interestingly, there is a parallel in semiconductors. While current is carried by the electrons, in a very real sense objects called “holes” travel in the reverse direction to the electrons. Holes are what they sound like: places where an electron is absent. However, I believe that in semiconductor theory they are much more than mere gaps, and behave like real particles.

It’s amazing how powerful programming is. Microsoft Windows is probably the most powerful program that non-programmers come into contact with. It does so many things “under the hood” that people take for granted, and it is all based on the absence or presence of things, much like Jacquard’s loom and the music boxes. While that is an analogy, it is not too far from the mark, and many people will remember having been told, more or less accurately, that computers run on ones and zeroes.

When a programmer sits down to write a program he or she doesn’t start writing ones and zeroes. He or she writes chunks of stuff which non-programmers would partially recognise. English words like “print”, “do”, “if” and “while” might appear. Symbols that look like maths might also appear. Depending on the language, the code might be sprinkled with dollar signs, which have nothing directly to do with money, by the way.

The programmer writes in a “language”, which is much more tightly defined than ordinary language, but basically it details at a relatively high level what the programmer wants to happen.

The programmer may tell the program to “read” something and if the value read is positive or is “Baywatch” or is “true”, do something. The programmer has to bear in mind that often the value is NOT what the programmer wants the program to look for and it is the programmer’s responsibility to handle not only the “positive” outcome but also the “negative” one. He or she will tell the program to do something else.
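To make that concrete, here is a minimal sketch in Python of a program deciding what to do with a value it has read. The function name `respond` and the messages it returns are made up for illustration; the point is only that the last line deals with the “negative” outcome, the case where the value is none of the things the programmer was looking for.

```python
def respond(value):
    """Decide what to do with a value that has just been read."""
    if value == "Baywatch":
        return "matched the title"
    if value is True:
        return "flag is set"
    # bool is excluded above, so this branch only sees ordinary numbers
    if isinstance(value, (int, float)) and value > 0:
        return "positive number"
    # The "negative" outcome: none of the expected values turned up,
    # so the program has to do something else.
    return "something else"


for item in ["Baywatch", True, 5, -3, "Knight Rider"]:
    print(repr(item), "->", respond(item))
```

Leaving off that final `return` would not stop the program from running, but it would quietly hand back nothing for every unexpected value, which is exactly the kind of gap the programmer is responsible for closing.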

When the programmer tells the program to “read” something, he or she essentially invokes a program that someone else has written whose only job is to respond to the “read” command. These “utility” programs are often written in a more esoteric language than the original programmer uses (though they don’t have to be), and since they do one specific task they can be used by anyone who programs on the computer.

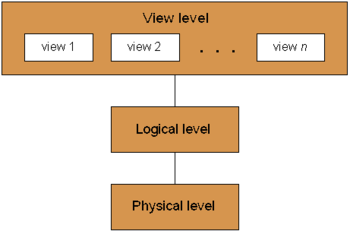

This program instructs other, lower level programs to do things for it. Again these lower level programs do one specific thing and can be used by other programs on the computer. It can be seen that I am describing a hierarchy of ever more specialised programs doing more and more specific tasks. It’s not quite like the Siphonaptera though, as the programs eventually reach the hardware level.
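A toy version of that hierarchy might look like the Python sketch below. All three function names are hypothetical, and the “hardware” is just a list standing in for a screen. Each layer does one job and hands the rest to the layer beneath it.

```python
def write_byte(device, byte):
    """Lowest level: put one byte on the (pretend) hardware."""
    device.append(byte)


def write_text(device, text):
    """Middle level: turn text into bytes and pass them down one by one."""
    for byte in text.encode("ascii"):
        write_byte(device, byte)


def print_line(device, text):
    """Top level: what the application programmer actually calls."""
    write_text(device, text + "\n")


screen = []  # a plain list stands in for the real device
print_line(screen, "Hi")
print(screen)  # [72, 105, 10]
```

Anyone else writing a program can call `write_text` or `write_byte` directly, which is the sense in which these lower level pieces can be shared.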

At the hardware level it will not be apparent what the programs are intended for, but the people who wrote them know the hardware and what the program needs to do. This knowledge comes partly from the hierarchy of programs above, but also from similar programs that have already been written.

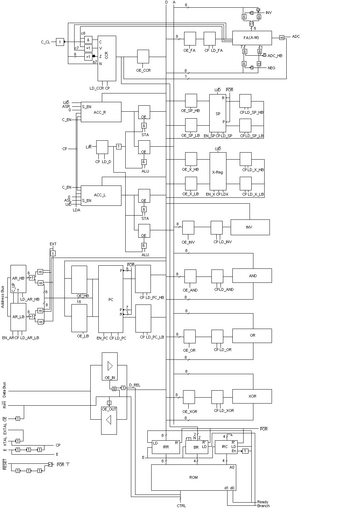

Without going into detail, the low level program might require a value to be supplied to the CPU of the computer. It will cause a number of conducting lines (collectively a “bus”) to be in one of two states, corresponding to a one or a zero, or it might cause a single line to vary between the states, sending a chain of states to the CPU.

In either case the states arrive in a “register”, which is a bit like a railway station. The CPU sends the chains of states (or bits) through its internal “railway system”, arranging for them to be compared, shifted, merged and manipulated in many ways. The end result is one or more chains of states arriving at registers, from whence they are picked up and used by the programs, with the end result being whatever the programmer asked for, way up in the stratosphere!
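As a rough illustration only, the Python sketch below imitates the two ways of delivering a value and a few of the manipulations mentioned. Real hardware does this with voltages rather than lists, and the function names and the 8-bit register width are assumptions made for the example.

```python
def load_parallel(lines):
    """A bus: every line is read at once; line i carries bit i of the value."""
    return sum(state << i for i, state in enumerate(lines))


def load_serial(stream):
    """A single line: states arrive one after another, most significant first."""
    register = 0
    for state in stream:
        # Shift what is already there along and let the new bit in.
        register = ((register << 1) | state) & 0xFF  # keep to 8 bits
    return register


a = load_parallel([1, 0, 0, 0, 0, 0, 1, 0])  # lines 0 and 6 are "on"
b = load_serial([0, 1, 0, 0, 0, 0, 0, 1])    # the same value, sent bit by bit

same = (a == b)              # compared
shifted = (a << 1) & 0xFF    # shifted one place to the left
merged = a | 0b00100000      # merged with another pattern of bits

print(a, b, same, shifted, merged)
```

Both routes leave the same pattern of ones and zeroes in the register, which is all that the steps that follow care about.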

This is a monumental achievement, pun intended, and is only achievable because at each level the programmer writes a program that performs one task at that level and doesn’t concern itself at all with any other levels, except that it conforms to the requests coming from above (the interface, technically). This is called abstraction.